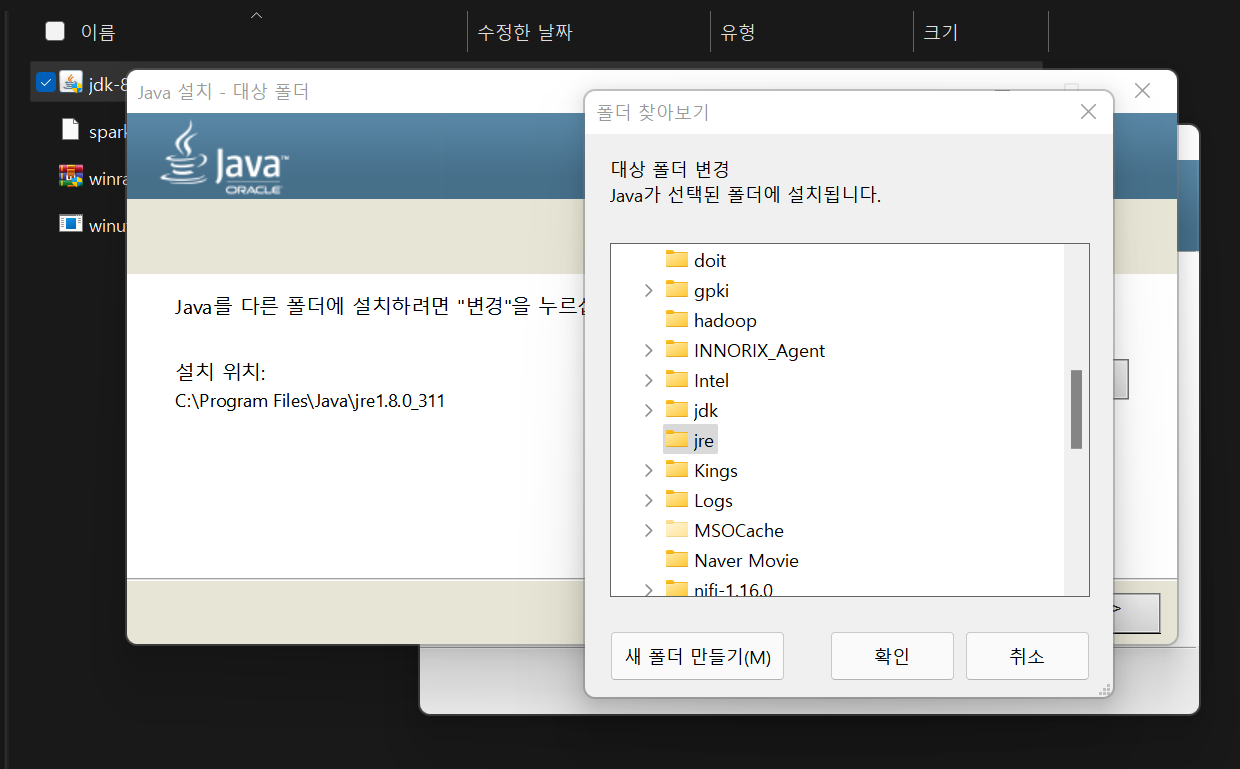

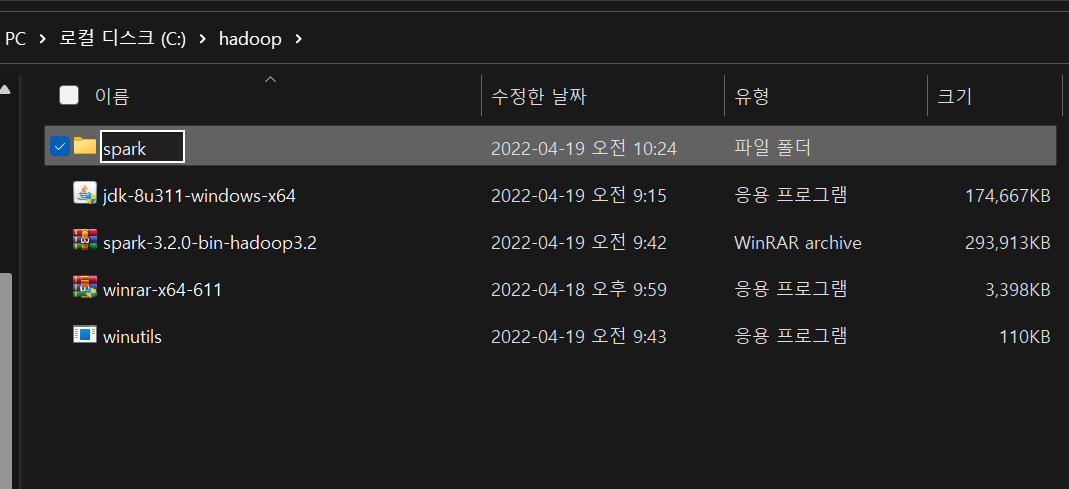

Step 1. Install the Java JDK

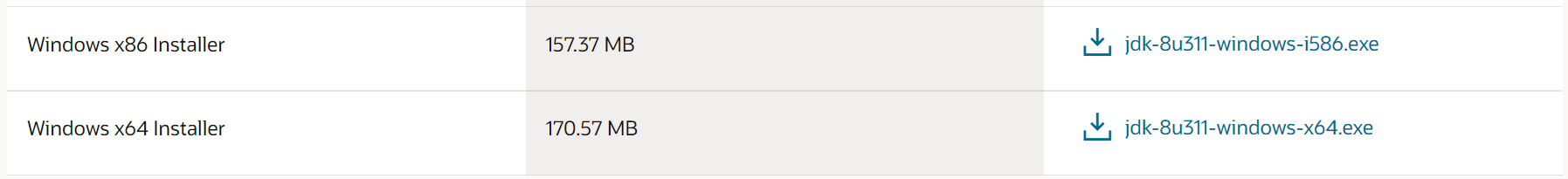

Download the Windows installer.

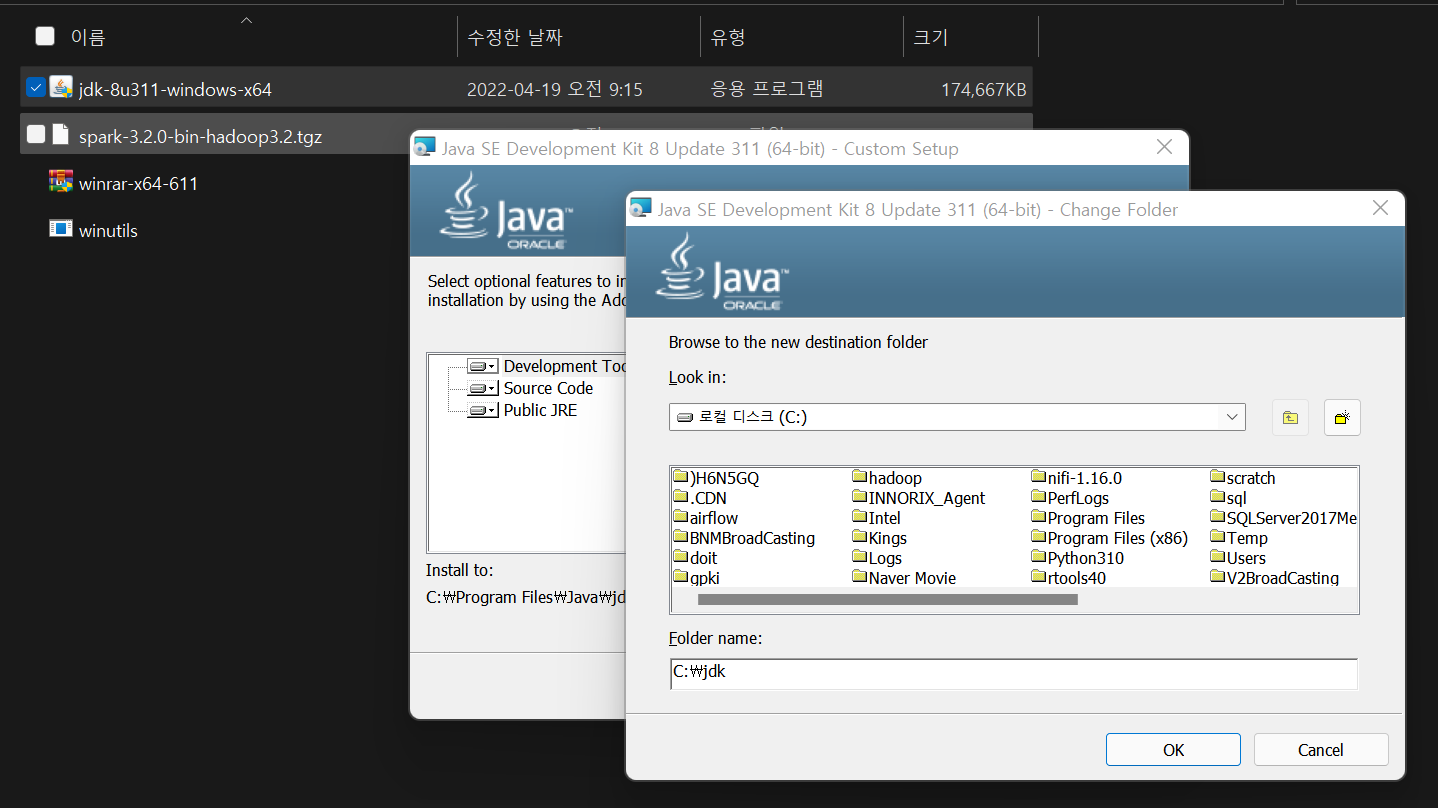

Run the downloaded file as an administrator. Change the installation path as shown in the picture below. (Make sure the path name contains no spaces.)

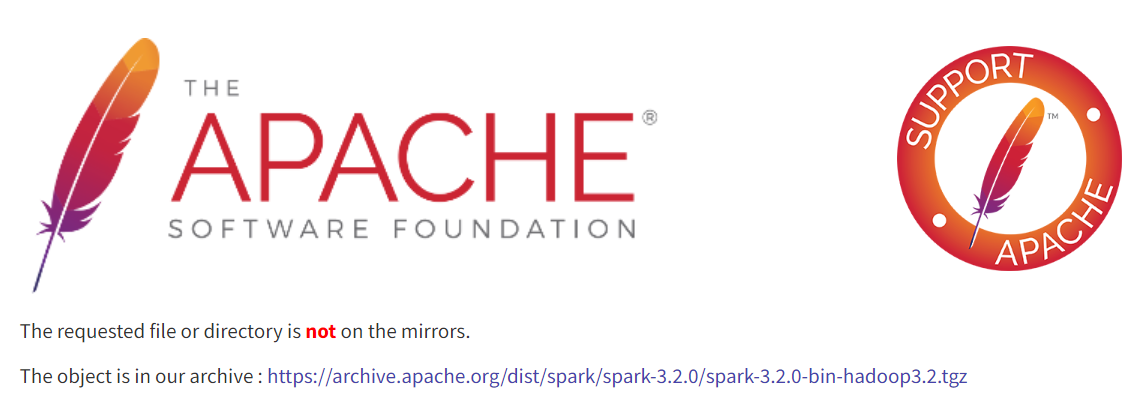
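The no-spaces rule above can be checked before running the installer. The sketch below is only an illustration: the function name `path_has_spaces` and both example paths are made up for this example and are not the paths used in the screenshots.

```python
def path_has_spaces(path: str) -> bool:
    """Return True if the install path contains any whitespace character."""
    return any(ch.isspace() for ch in path)


if __name__ == "__main__":
    # Both paths are illustrative examples, not the author's actual choices.
    for candidate in (r"C:\Program Files\Java\jdk", r"C:\jdk"):
        status = "contains spaces" if path_has_spaces(candidate) else "ok"
        print(f"{candidate}: {status}")
```

A path such as the default Program Files location would be flagged, which is why the installer path is changed in this step.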

Step 2. Install Spark

Download the Spark .tgz file. (Click the link in the images below.)

Download WinRAR to unzip the .tgz compressed file, and run it as an administrator.

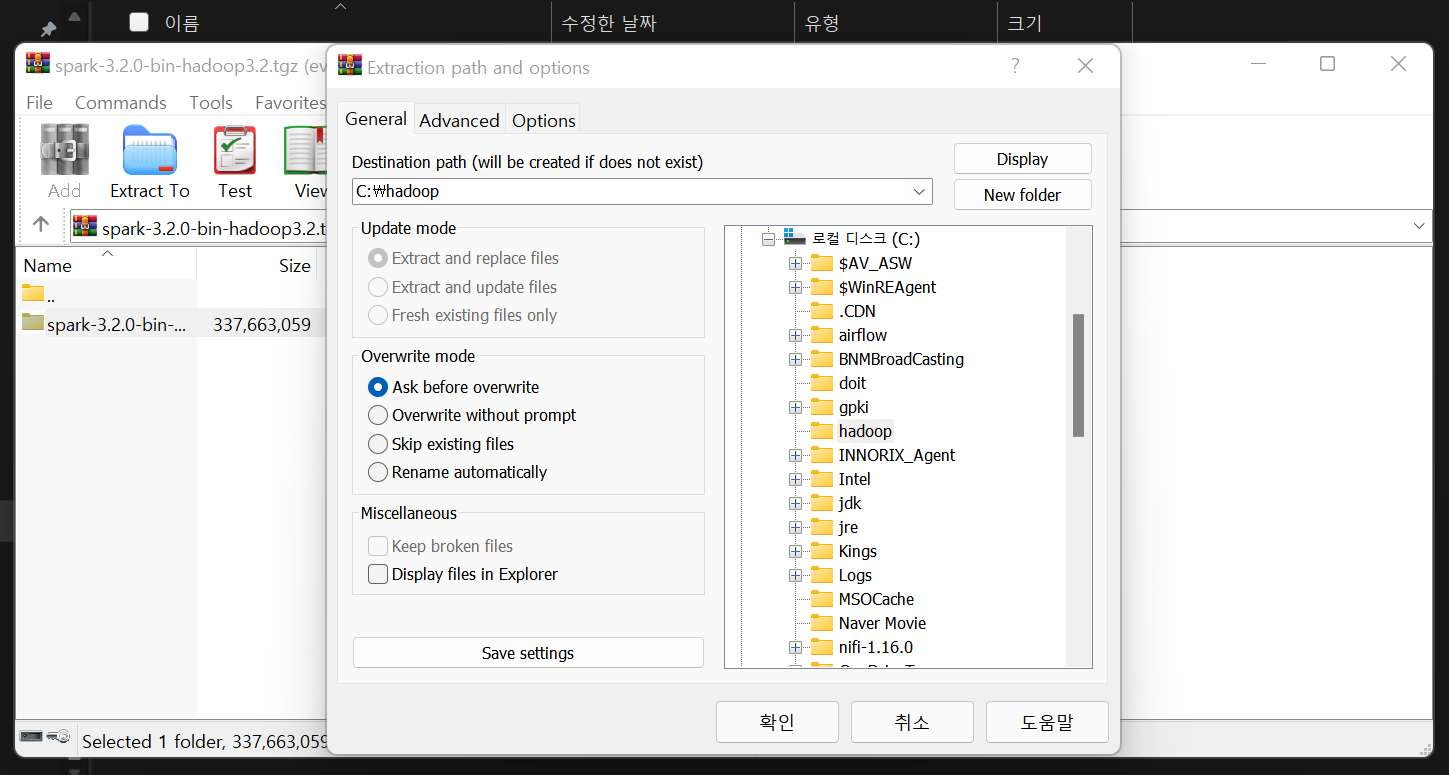

Open the Spark file with WinRAR and extract it to a folder.

Rename the extracted folder to spark, and copy it to the root of the C drive (C:\spark).

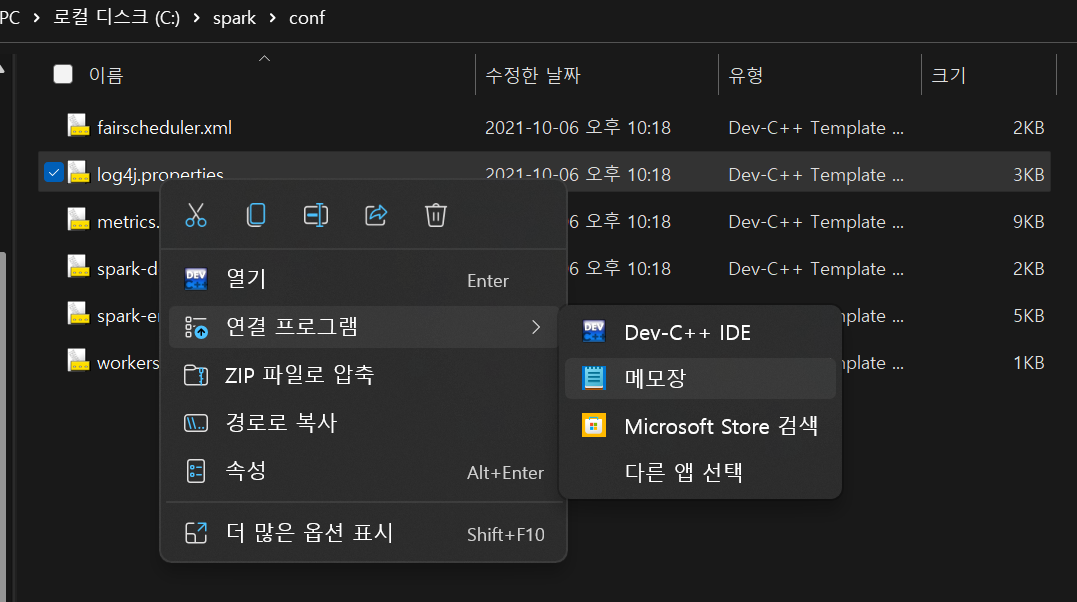

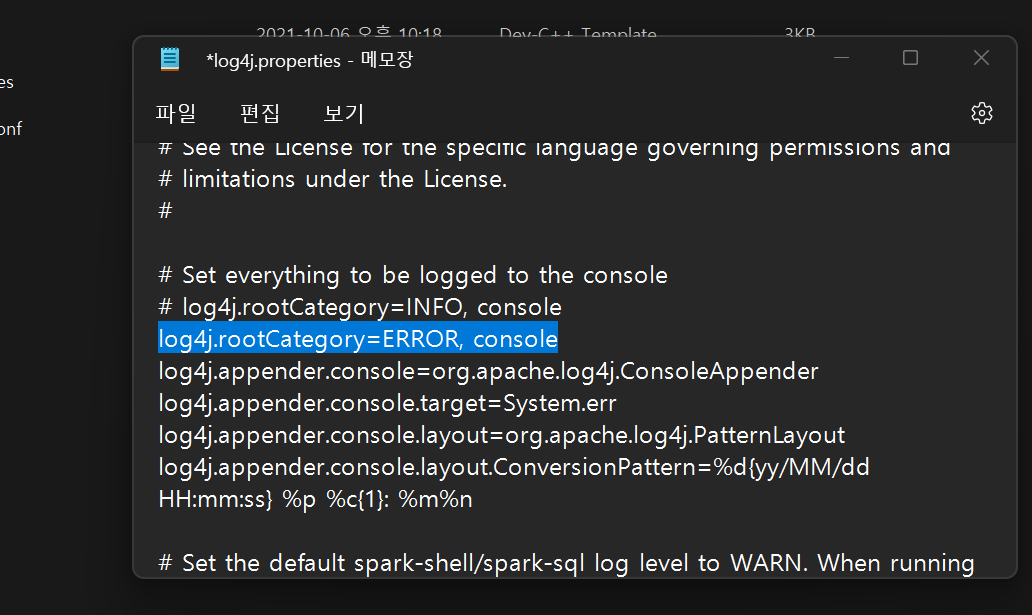

Open the spark\conf\log4j.properties file with Notepad, and change the log4j.rootCategory value from INFO to ERROR.

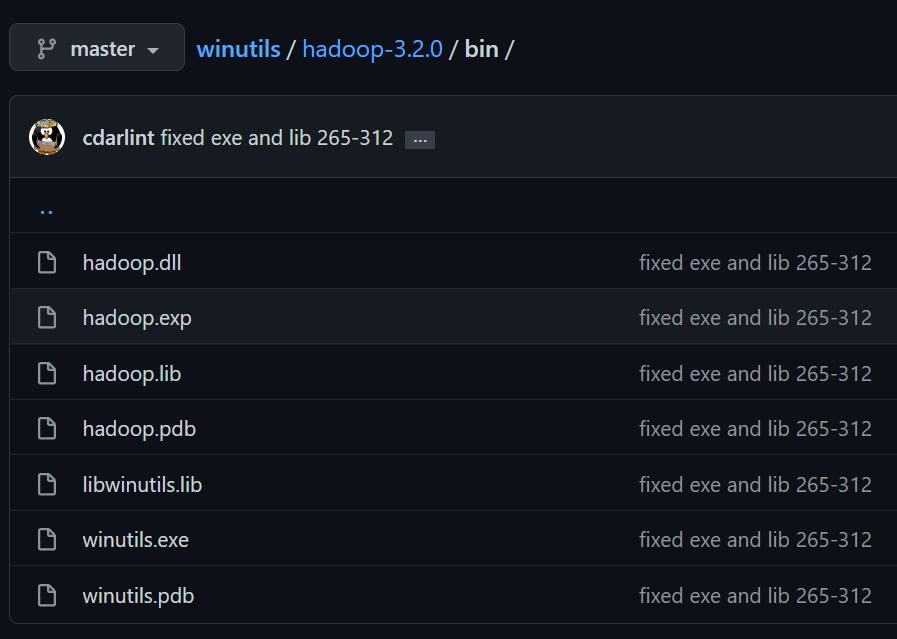

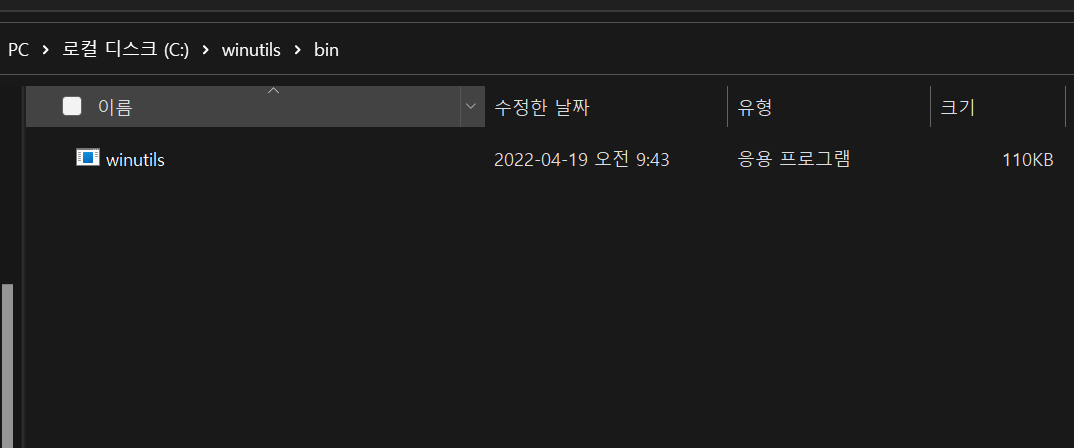
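The same edit to log4j.rootCategory can be scripted instead of done by hand in Notepad. This is a minimal sketch, assuming the line looks like `log4j.rootCategory=INFO, console`; the function name `set_root_level` is hypothetical, and the demo works on an in-memory string rather than on your real file.

```python
import re


def set_root_level(properties_text: str, level: str = "ERROR") -> str:
    """Replace the level on the log4j.rootCategory line, keeping the appenders."""
    # Match only an uncommented rootCategory line; capture everything up to the level.
    pattern = re.compile(r"^([ \t]*log4j\.rootCategory[ \t]*=[ \t]*)\w+", re.MULTILINE)
    return pattern.sub(lambda match: match.group(1) + level, properties_text)


if __name__ == "__main__":
    # Sample content for illustration; the real file has more lines.
    sample = "log4j.rootCategory=INFO, console\nlog4j.logger.org.example=INFO\n"
    print(set_root_level(sample))
```

To apply it to the real file, read spark\conf\log4j.properties into a string, pass it through the function, and write the result back. Only the root level changes; other logger lines are left as they are.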

Step 3. Install Winutils

Download winutils.exe. (Check the version of Spark.)

- URL : ‣

Create a folder C:\winutils\bin, and copy winutils.exe into it.

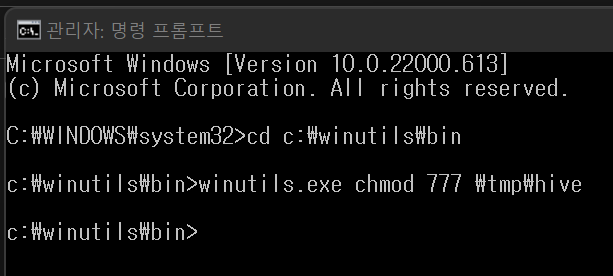

Open a CMD window as an administrator, and run the commands below.

> cd c:\winutils\bin

> winutils.exe chmod 777 \tmp\hive

ChangeFileModeByMask error (2): The system cannot find the file specified.

If the above error occurs, create the tmp\hive folder under the C drive (C:\tmp\hive) and run the command again.
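Creating the tmp\hive folder up front avoids the error on the first run. The following is a small sketch of that step; the helper name `ensure_hive_tmp` and its `drive_root` parameter are assumptions made for this example.

```python
import os


def ensure_hive_tmp(drive_root: str = "C:\\") -> str:
    """Create <drive_root>\\tmp\\hive if it is missing and return its path."""
    hive_dir = os.path.join(drive_root, "tmp", "hive")
    os.makedirs(hive_dir, exist_ok=True)  # no error if the folder already exists
    return hive_dir


# Usage on Windows (creates C:\tmp\hive when it does not exist yet):
#     ensure_hive_tmp()
```

After the folder exists, the winutils.exe chmod command from this step can be run again.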

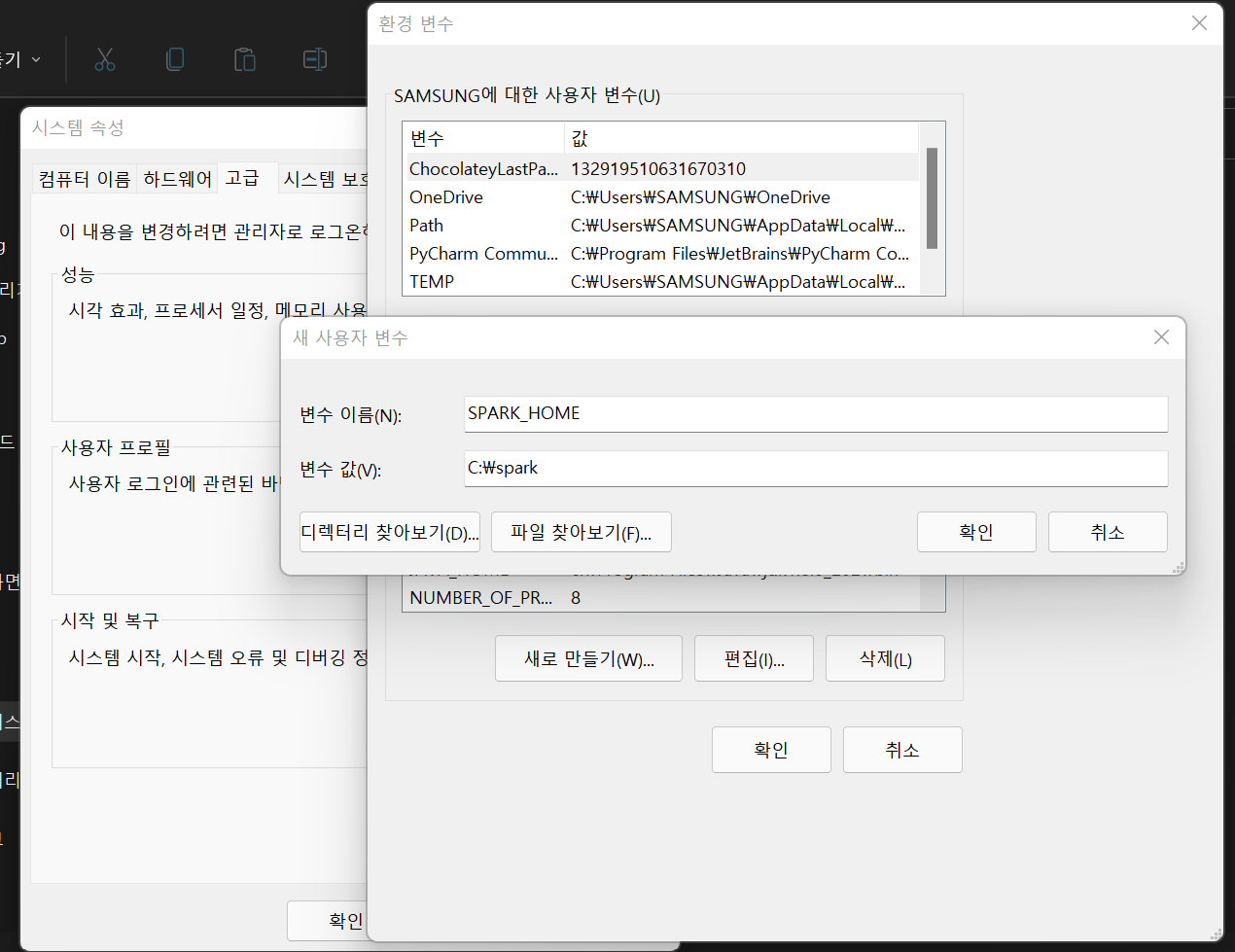

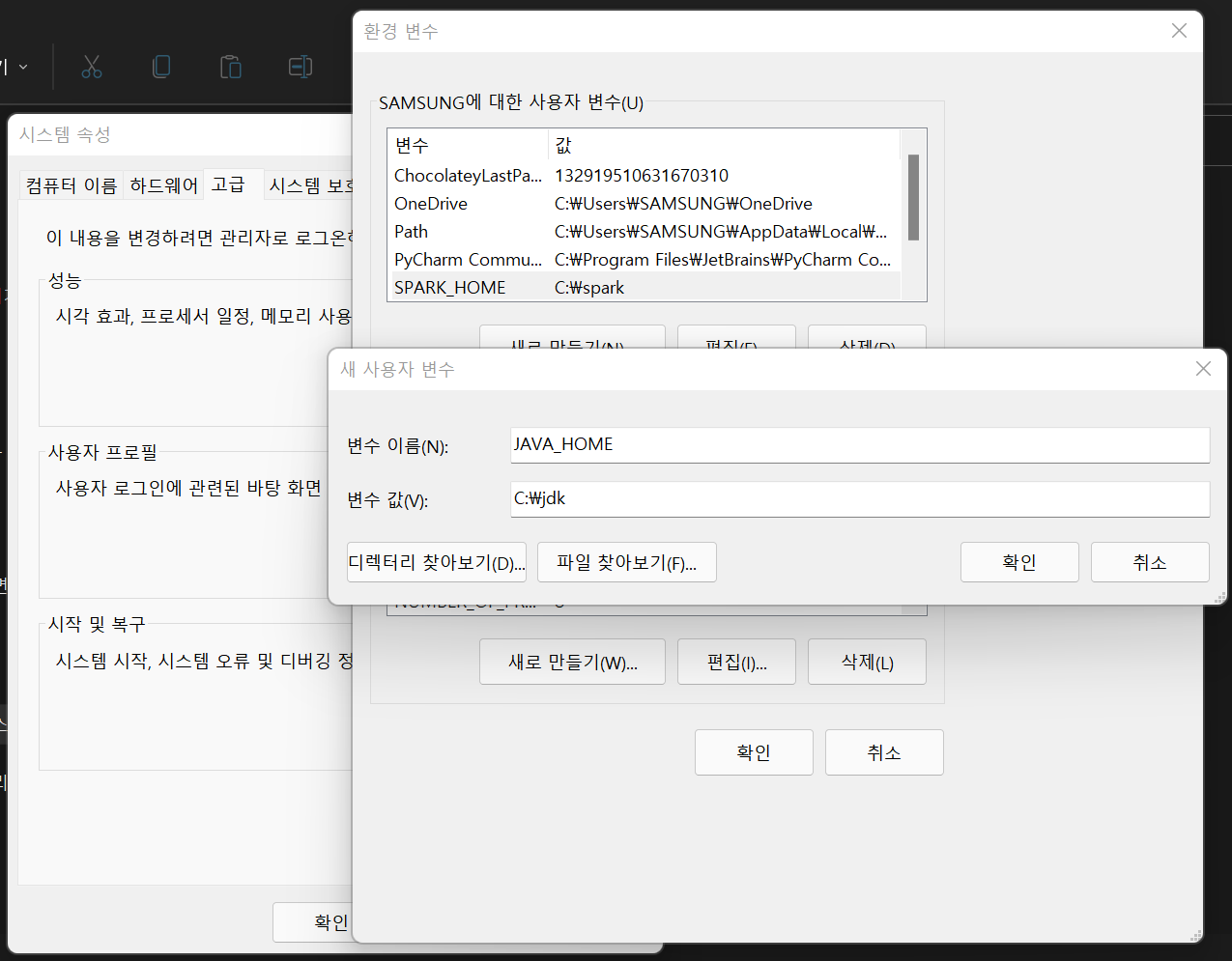

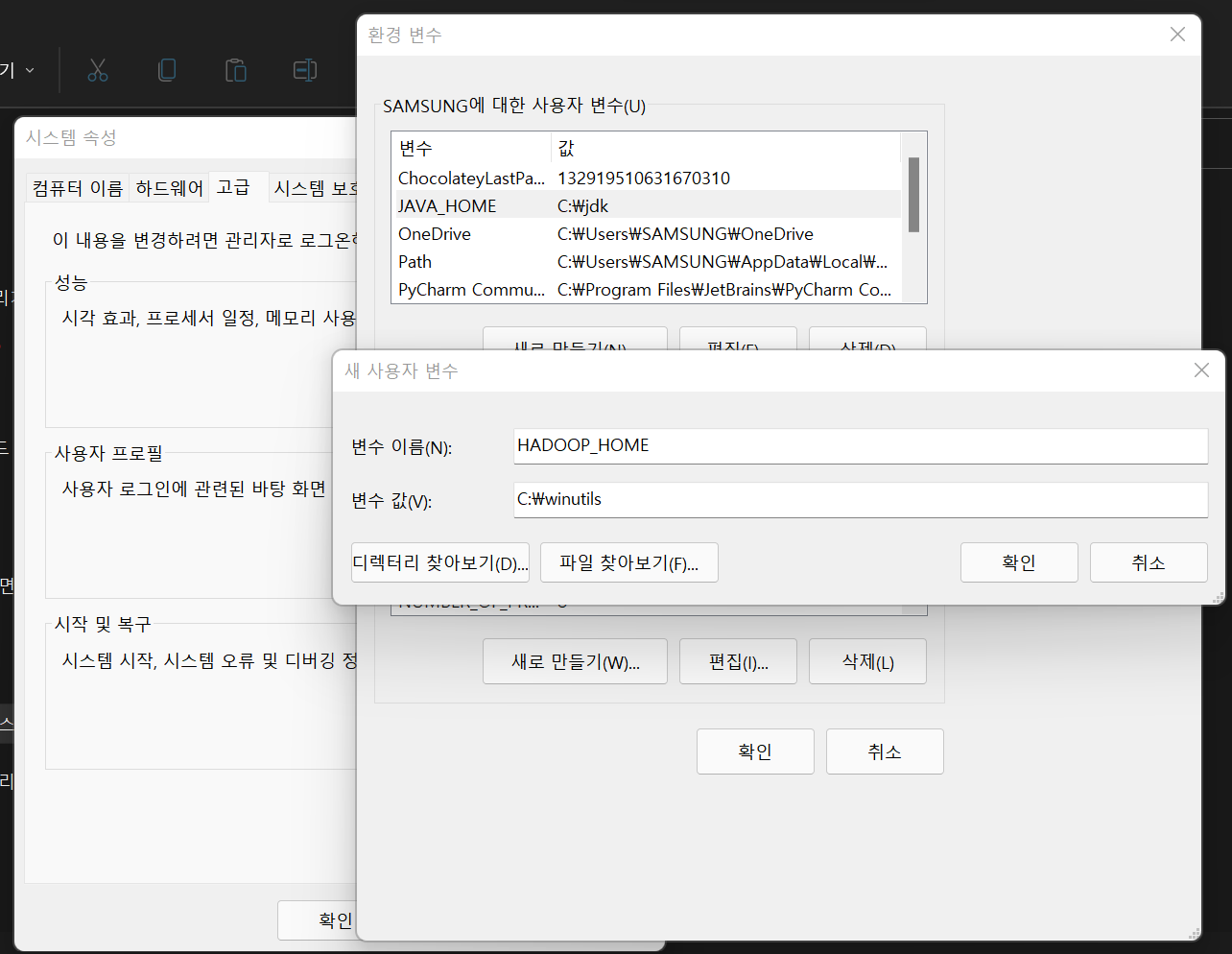

Step 4. Setting environment variables

Create a new user variable SPARK_HOME, and set its value to the path of the spark folder (C:\spark).

Create a new user variable JAVA_HOME, and set its value to the path of the JDK folder.

Create a new user variable HADOOP_HOME, and set its value to the path of the winutils folder (C:\winutils).

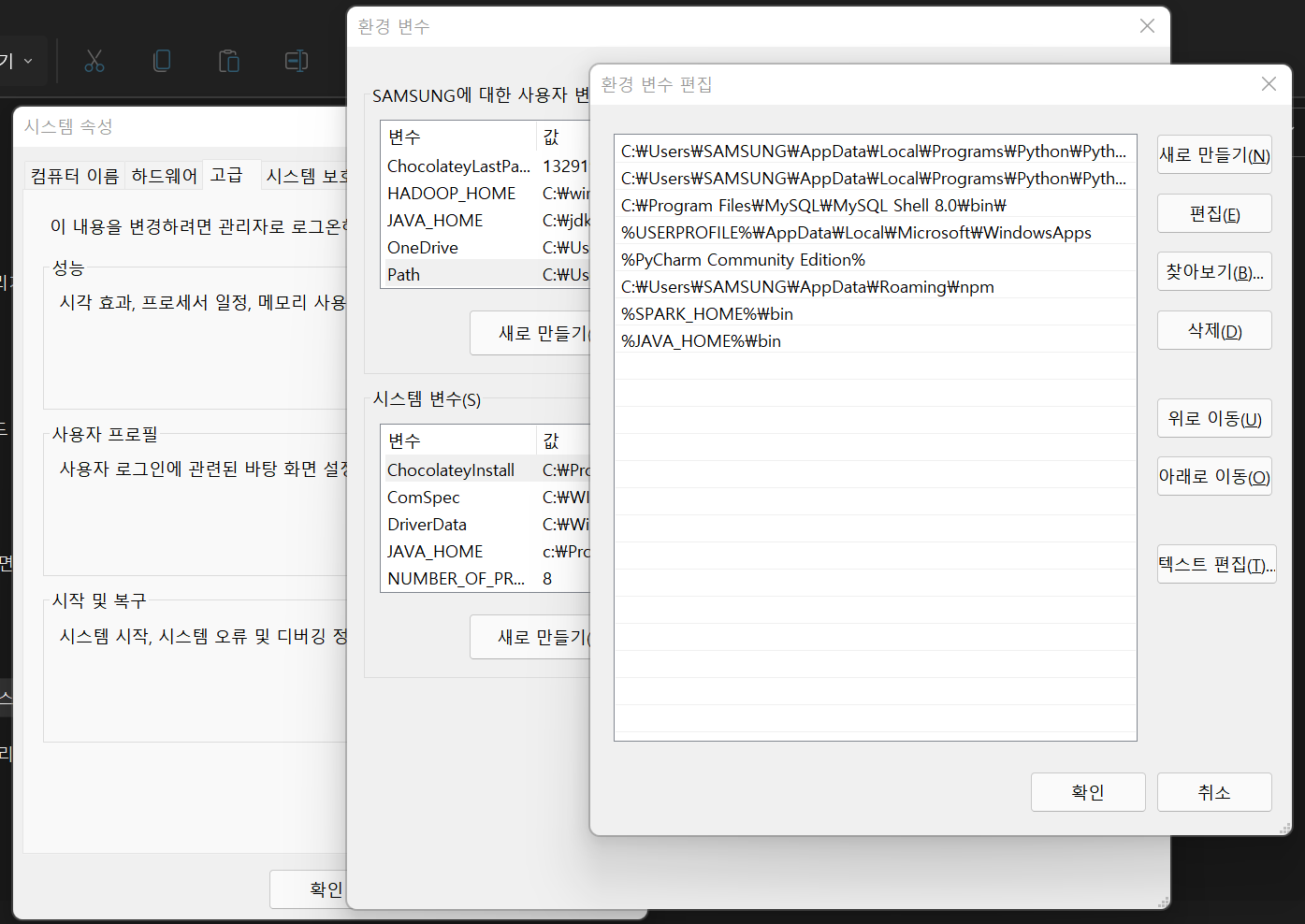

Edit the Path variable.

- Insert %SPARK_HOME%\bin and %JAVA_HOME%\bin.

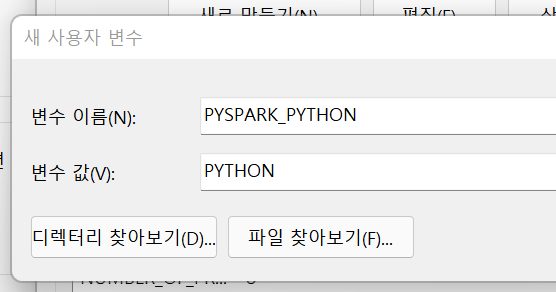

Create a new user variable PYSPARK_PYTHON, and set its value to PYTHON.

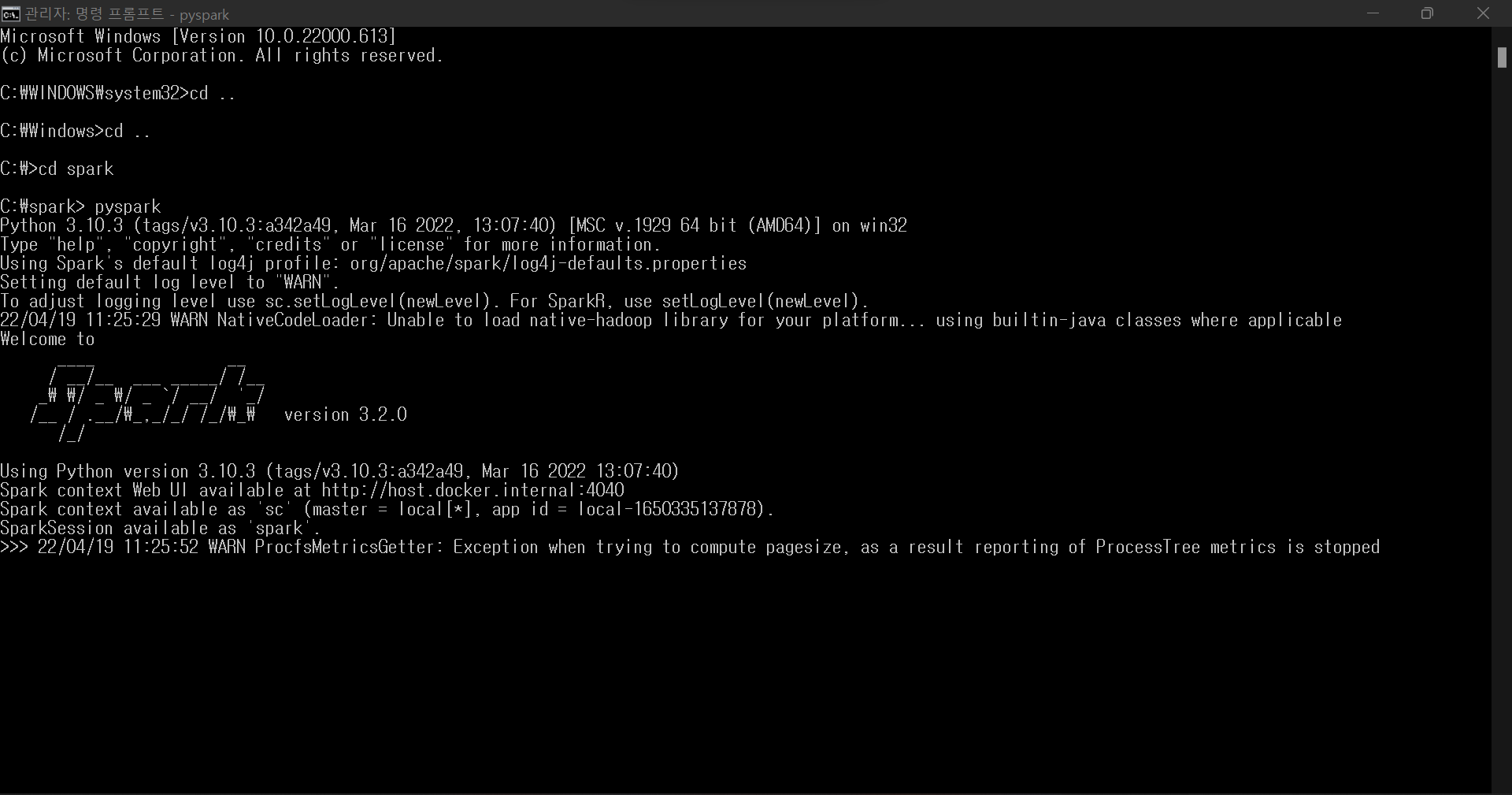
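Before starting pyspark, it can help to confirm that the variables from this step are visible to new processes. This is a rough sketch: `find_env_problems` is a name invented for this example, and the space check is applied only to JAVA_HOME because that is the path the tutorial warns about.

```python
import os
from typing import List, Mapping

# Variables created in Step 4 of this tutorial.
REQUIRED_VARS = ("SPARK_HOME", "JAVA_HOME", "HADOOP_HOME", "PYSPARK_PYTHON")


def find_env_problems(env: Mapping[str, str]) -> List[str]:
    """Return a list of human-readable problems found in the given environment."""
    problems = []
    for name in REQUIRED_VARS:
        value = env.get(name, "")
        if not value:
            problems.append(f"{name} is not set")
        elif name == "JAVA_HOME" and " " in value:
            problems.append(f"{name} contains a space: {value}")
    return problems


if __name__ == "__main__":
    # Open a new CMD window first so that freshly created variables are loaded.
    for line in find_env_problems(os.environ) or ["all variables look fine"]:
        print(line)
```

An empty result only means the variables are set. It does not verify that the folders they point to contain a working installation.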

Open a CMD window as an administrator, and run pyspark from the c:\spark path.

Run the code below at the pyspark prompt in the CMD window and check the printed result.

> rd = sc.textFile("README.md")

> rd.count()

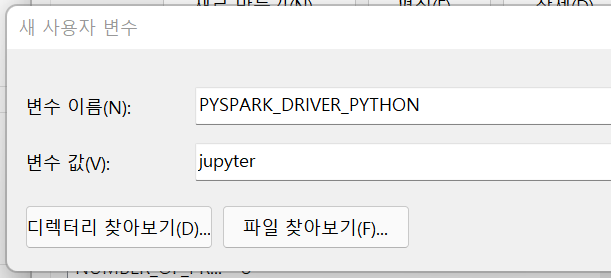

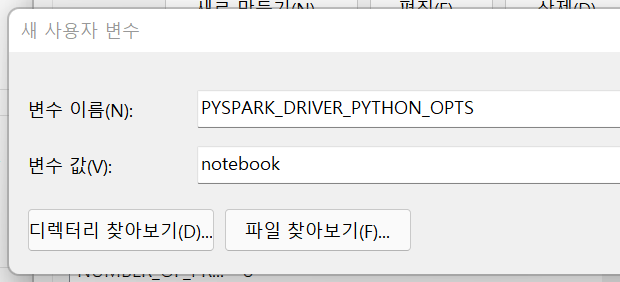
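sc.textFile reads the file as one record per line, so rd.count() returns the number of lines in README.md. As a quick cross-check outside Spark, the same number can be computed in plain Python. This is a sketch that assumes the file is UTF-8 text; `count_lines` is a hypothetical helper name.

```python
def count_lines(path: str) -> int:
    """Count the lines of a text file, one record per line."""
    with open(path, encoding="utf-8") as handle:
        return sum(1 for _ in handle)


# Usage from the c:\spark folder:
#     count_lines("README.md")
```

If the two numbers agree, Spark is reading the local file correctly.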

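In this walkthrough rd.count() printed 109; the exact number depends on the README.md shipped with your Spark version.

Create the new user variables below and set their values so that the pyspark command opens a Jupyter notebook instead of the console shell.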

- PYSPARK_DRIVER_PYTHON : jupyter

- PYSPARK_DRIVER_PYTHON_OPTS : notebook